Engaging content and strategic link building remain critical, but underlying technical elements can make or break your search performance. A thorough technical SEO audit uncovers hidden obstacles affecting crawlability, indexation, and user experience, all of which influence how search engines like Google crawl, index, and rank your pages. By systematically diagnosing and fixing these issues, you pave the way for improved organic visibility, increased traffic, and a seamless visitor journey.

Page speed, mobile-friendliness, and secure protocols all shape how search engines and users experience your pages. As of 2026, Core Web Vitals, HTTPS, and mobile-friendly design remain part of what Google describes as page experience. Whether you are an SEO veteran or a business owner looking to perform a DIY review, this guide walks you through each stage of a technical SEO audit. We’ll cover widely used tools, proven methodologies, and actionable recommendations to strengthen your site’s foundation. By the end of this article, you’ll have a clear roadmap for a technical SEO audit that aligns with authoritative standards and drives sustainable results.

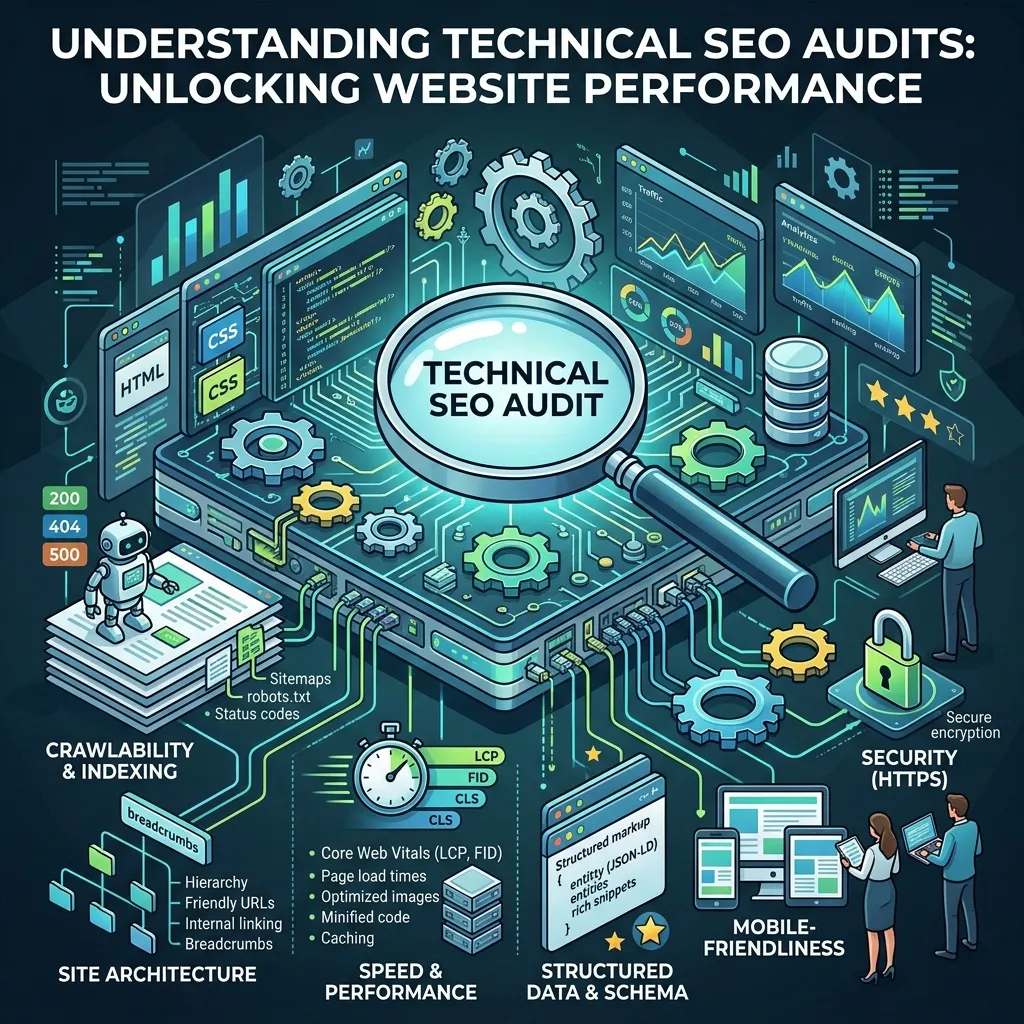

Understanding Technical SEO Audits

A technical SEO audit is the process of evaluating a website’s backend infrastructure and performance metrics that influence search engine crawlers. Unlike content-focused approaches, this audit dives into server configurations, site architecture, code quality, and performance indicators. The primary goal is to ensure that bots can access, render, and index pages without encountering errors or inefficiencies.

Performing a robust audit involves multiple stages. You begin by collecting baseline data—performance scores, crawl logs, indexation status—then identify anomalies and bottlenecks. A well-structured audit not only highlights existing problems but also prioritizes them based on impact and effort required for resolution.
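
One way to make the impact-versus-effort prioritization concrete is a simple score. The sketch below is only an illustration: the `Issue` class, the 1 to 5 scales, and the sample findings are all assumptions, not a standard scoring model.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    impact: int  # assumed scale: 1 (minor) to 5 (severe)
    effort: int  # assumed scale: 1 (quick fix) to 5 (major project)


def prioritize(issues):
    """Sort issues so high-impact, low-effort fixes come first."""
    return sorted(issues, key=lambda issue: (-issue.impact / issue.effort, issue.name))


# Hypothetical findings for illustration only.
findings = [
    Issue("Slow LCP on product templates", impact=4, effort=4),
    Issue("Key section blocked in robots.txt", impact=5, effort=1),
    Issue("Redirect chains on old blog URLs", impact=3, effort=2),
]

for issue in prioritize(findings):
    print(f"{issue.impact / issue.effort:.2f}  {issue.name}")
```

A plain ratio is easy to explain to stakeholders; you may prefer weighted scores if some issue types matter more for your site.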

Key Components of a Technical Audit

- Crawlability & Indexation: Verifying that search engines can discover and record your pages.

- Site Structure & URL Design: Ensuring a logical hierarchy and user-friendly links.

- Performance Optimization: Improving load speeds, Core Web Vitals, and resource efficiency.

- Security & Protocols: Implementing HTTPS, security headers, and up-to-date certificates.

- Mobile Readiness: Confirming a responsive layout and smooth mobile interactions.

By focusing on these critical areas, you lay the groundwork for a site that not only ranks higher but also delivers a superior user experience.

Essential Tools and Resources

Choosing the right toolkit is vital for an efficient technical SEO audit. Today’s market offers a variety of desktop crawlers, performance scanners, and log analyzers that streamline the process. Below, we outline some of the most trusted solutions used by industry experts.

Google Search Console

Google Search Console reports on indexing, HTTPS coverage, and Core Web Vitals. Use its Page indexing report to monitor indexing status and identify critical errors that may hinder your site’s visibility; the Google Search Central documentation explains how to interpret each report.

Screaming Frog SEO Spider

This desktop crawler can scan thousands of pages quickly, revealing broken links, redirect chains, missing metadata, and more. Its comprehensive reporting capabilities make it a staple for professional auditors.

Page Speed Testing Tools

Tools like Google PageSpeed Insights, GTmetrix, and Lighthouse evaluate performance metrics such as First Contentful Paint, Largest Contentful Paint, and Total Blocking Time. These platforms offer optimization suggestions—image compression, resource minification, and efficient caching strategies.

Log File Analyzers

Analyzing server logs shows how crawlers actually interact with your site, request by request. Solutions like Screaming Frog Log File Analyzer or Splunk allow you to pinpoint errors and optimize your crawl budget.
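
If you want a quick look before reaching for a dedicated tool, a few lines of Python can summarize crawler activity. This is a minimal sketch that assumes your server writes the common "combined" access log format; the sample lines and the `crawler_status_counts` helper are hypothetical.

```python
import re
from collections import Counter

# Matches the "combined" access log format commonly used by Apache and Nginx.
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)


def crawler_status_counts(lines, bot_token="Googlebot"):
    """Count response status codes for requests whose user agent contains bot_token."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.match(line.strip())
        if match and bot_token in match.group("agent"):
            counts[match.group("status")] += 1
    return counts


# Hypothetical sample lines for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Jan/2026:10:00:00 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Jan/2026:10:00:05 +0000] "GET /old-page HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Jan/2026:10:00:09 +0000] "GET /blog/ HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

print(crawler_status_counts(SAMPLE_LOG))
```

Keep in mind that user agent strings can be spoofed, so a match on "Googlebot" alone does not prove the request came from Google.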

Mobile and Accessibility Checkers

Ensuring responsive design and touch-friendly elements is critical for mobile-first indexing. Reference the W3C Media Queries specification when testing viewport settings and responsive breakpoints.

Equipped with these resources, you can capture a comprehensive picture of your site’s technical health.

Optimizing Crawlability, Indexation, and Site Structure

Search engines first need to discover your pages before ranking them. Issues that block bots can severely limit your organic reach. Below are the core steps to secure optimal crawlability and indexation.

Reviewing Robots.txt and XML Sitemaps

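The robots.txt file tells bots which paths they should not crawl. It controls crawling, not indexing: a blocked URL can still appear in results if other pages link to it, so use a noindex directive for pages that must stay out of search. Ensure you’re not inadvertently blocking vital sections of your site. At the same time, maintain an accurate XML sitemap that lists only canonical, indexable URLs. This acts as a roadmap for crawlers to locate your most important content.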
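
To check that important URLs are not blocked, you could test them against your rules with Python's standard `urllib.robotparser` module. The sketch below uses a hypothetical robots.txt and URL list; `blocked_urls` is an illustrative helper, not part of any SEO tool.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; in a real audit, load the file from your site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart/
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given user agent is not allowed to crawl."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical list of pages that should stay crawlable.
important = [
    "https://example.com/",
    "https://example.com/blog/technical-seo-audit",
    "https://example.com/cart/checkout",
]

print(blocked_urls(ROBOTS_TXT, important))
```

The standard-library parser uses simple prefix matching, so results for rules with wildcards may differ from how Google interprets them. Treat this as a first pass rather than a final verdict.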

Analyzing Server Response Codes

Monitor 200 (OK), 301 (Permanent Redirect), 302 (Temporary Redirect), 404 (Not Found), and 5xx (Server Error) responses. Excessive 404s or chains of redirects can waste crawl budget and degrade user experience. Use your crawler to detect these anomalies and implement proper 301 redirects or fix broken links.
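
Redirect chains and loops are easy to spot once you have a crawl export. Here is a minimal sketch that assumes you have already reduced the export to a mapping of URL to status code and redirect target; the `CRAWL` data and `trace_redirects` helper are hypothetical.

```python
REDIRECT_CODES = {301, 302, 307, 308}


def trace_redirects(start_url, responses):
    """Follow redirects through crawl data and return (chain, outcome).

    `responses` maps URL -> (status_code, redirect_target_or_None).
    The outcome is the final status code, "loop" if a URL repeats,
    or "unknown" if a URL in the chain is missing from the data.
    """
    chain = [start_url]
    url = start_url
    while True:
        if url not in responses:
            return chain, "unknown"
        status, target = responses[url]
        if status not in REDIRECT_CODES or target is None:
            return chain, status
        if target in chain:
            return chain + [target], "loop"
        chain.append(target)
        url = target


# Hypothetical crawl data for illustration only.
CRAWL = {
    "http://example.com/a": (301, "https://example.com/a"),
    "https://example.com/a": (301, "https://example.com/b"),
    "https://example.com/b": (200, None),
    "https://example.com/x": (302, "https://example.com/y"),
    "https://example.com/y": (302, "https://example.com/x"),
    "https://example.com/gone": (404, None),
}

for start in ("http://example.com/a", "https://example.com/x", "https://example.com/gone"):
    chain, outcome = trace_redirects(start, CRAWL)
    print(f"{len(chain) - 1} hop(s), outcome {outcome}: {' -> '.join(chain)}")
```

Any result with more than one hop is a candidate for pointing the first URL straight at the final destination.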

Designing an Intuitive Site Hierarchy

A shallow, logical structure helps both users and bots navigate your site efficiently. Aim for no page to be more than three clicks away from the homepage. Group related content into clear silos with keyword-rich categories and subdirectories.
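
The three-click guideline can be checked with a breadth-first search over your internal link graph. This sketch assumes you can export internal links as a mapping of page to linked pages; the `LINKS` graph and function names are hypothetical.

```python
from collections import deque


def click_depths(links, home):
    """Breadth-first search from the homepage; returns {page: clicks from home}."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, home, max_clicks=3):
    """Return reachable pages that sit more than max_clicks from the homepage."""
    depths = click_depths(links, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical internal link graph for illustration only.
LINKS = {
    "/": ["/blog/", "/shop/"],
    "/blog/": ["/blog/seo/"],
    "/blog/seo/": ["/blog/seo/audits/"],
    "/blog/seo/audits/": ["/blog/seo/audits/checklist/"],
}

print(too_deep(LINKS, "/"))
```

Breadth-first search gives the shortest click path to each page, which is the number that matters for this guideline.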

URL Best Practices

Create concise, descriptive URLs that include relevant keywords. Avoid long query strings and use hyphens instead of underscores to separate words. Consistency in URL structure minimizes confusion and improves crawl efficiency.
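
These URL rules are simple enough to lint automatically. The following is a rough sketch; the `lint_url` helper and both length thresholds are assumptions you should tune to your own conventions.

```python
from urllib.parse import urlsplit


def lint_url(url, max_length=100, max_query_length=40):
    """Return a list of URL hygiene issues (thresholds are illustrative assumptions)."""
    parts = urlsplit(url)
    issues = []
    if "_" in parts.path:
        issues.append("underscore in path (use hyphens)")
    if len(parts.query) > max_query_length:
        issues.append("long query string")
    if len(url) > max_length:
        issues.append("URL longer than max_length")
    return issues


# Hypothetical URLs for illustration only.
for url in (
    "https://example.com/blog/technical-seo-audit",
    "https://example.com/blog/technical_seo_audit",
    "https://example.com/products?category=shoes&color=red&size=42&sort=price_asc&page=3",
):
    print(url, lint_url(url))
```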

By implementing these best practices, you enable search engines to explore your site comprehensively, leading to more pages indexed and better overall performance.

Enhancing Performance, Security, and Mobile Usability

High-performance, secure, and mobile-friendly sites rank better and keep users engaged. Let’s delve into the critical factors you need to address.

Improving Page Speed

Page load time directly impacts bounce rates and rankings. Prioritize optimizing images with modern formats (WebP), deferring offscreen resources, and implementing lazy loading. Minify HTML, CSS, and JavaScript to reduce file sizes. Consider a Content Delivery Network (CDN) to serve content from locations closest to users.

Securing Your Website with HTTPS

HTTPS is a confirmed, if lightweight, Google ranking signal and a baseline expectation for users. Serve your entire site over HTTPS (TLS) and fix any mixed content errors where HTTP assets load on secure pages. Enable HTTP/2 to benefit from multiplexing and header compression. Don’t forget security headers like HSTS (Strict-Transport-Security), X-Content-Type-Options, and X-Frame-Options.
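
Both checks can be scripted against a saved response. The sketch below assumes you already have the response headers as a dictionary and the page HTML as a string; `missing_security_headers` and `MixedContentFinder` are hypothetical helpers, and the header list simply mirrors the three named above.

```python
from html.parser import HTMLParser

# The three headers named in this section; extend the list to suit your policy.
REQUIRED_HEADERS = (
    "Strict-Transport-Security",
    "X-Content-Type-Options",
    "X-Frame-Options",
)


def missing_security_headers(headers):
    """Return required headers absent from a response (header names are case-insensitive)."""
    present = {name.lower() for name in headers}
    return [name for name in REQUIRED_HEADERS if name.lower() not in present]


class MixedContentFinder(HTMLParser):
    """Collects http:// assets referenced via src attributes or <link href>."""

    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if not value or not value.startswith("http://"):
                continue
            # Ordinary <a href> links are navigation, not mixed content.
            if name == "src" or (tag == "link" and name == "href"):
                self.insecure.append(value)


def find_mixed_content(html):
    finder = MixedContentFinder()
    finder.feed(html)
    return finder.insecure


# Hypothetical response data for illustration only.
SAMPLE_HEADERS = {"strict-transport-security": "max-age=31536000", "Content-Type": "text/html"}
SAMPLE_HTML = (
    '<link rel="stylesheet" href="http://example.com/style.css">'
    '<img src="https://example.com/logo.png">'
    '<script src="http://example.com/app.js"></script>'
    '<a href="http://example.com/">Home</a>'
)

print(missing_security_headers(SAMPLE_HEADERS))
print(find_mixed_content(SAMPLE_HTML))
```

This only inspects static HTML, so assets injected by JavaScript or referenced inside CSS files would need a browser-based check.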

Optimizing for Mobile-First Indexing

With Google’s mobile-first indexing fully implemented, ensure that your mobile design mirrors the desktop version in terms of content and metadata. Validate responsive layouts, check touch targets, and confirm viewport configurations. Google retired its standalone Mobile-Friendly Test and Search Console’s Mobile Usability report in late 2023, so use Lighthouse or Chrome DevTools device emulation to spot issues.
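
A viewport check is one part of this you can automate across many templates. This is a minimal sketch using Python's standard HTML parser; `viewport_issues` is a hypothetical helper, and the zoom check is an added assumption based on common accessibility guidance rather than something covered above.

```python
from html.parser import HTMLParser


class ViewportParser(HTMLParser):
    """Records the content of the <meta name="viewport"> tag, if any."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attr = dict(attrs)
        if tag == "meta" and (attr.get("name") or "").lower() == "viewport":
            self.content = attr.get("content") or ""


def viewport_issues(html):
    """Return a list of viewport configuration problems found in the HTML."""
    parser = ViewportParser()
    parser.feed(html)
    if parser.content is None:
        return ["missing viewport meta tag"]

    # Parse "width=device-width, initial-scale=1" into a settings dictionary.
    settings = {}
    for part in parser.content.split(","):
        if "=" in part:
            key, value = part.split("=", 1)
            settings[key.strip().lower()] = value.strip().lower()

    issues = []
    if settings.get("width") != "device-width":
        issues.append("width is not device-width")
    if settings.get("user-scalable") in ("no", "0"):
        issues.append("zoom is disabled")  # assumption: flagged for accessibility
    return issues


print(viewport_issues('<meta name="viewport" content="width=device-width, initial-scale=1">'))
print(viewport_issues('<meta name="viewport" content="width=1024, user-scalable=no">'))
```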

Monitoring Core Web Vitals

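The Core Web Vitals metrics measure real-world user experience: Largest Contentful Paint (LCP) for loading, Interaction to Next Paint (INP) for responsiveness, and Cumulative Layout Shift (CLS) for visual stability. INP replaced First Input Delay (FID) as a Core Web Vital in March 2024. Continuously monitor these values in Search Console’s Core Web Vitals report and implement fixes like preloading key resources or using font-display strategies for faster rendering.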
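
When you export field data, it helps to bucket each value the way Google does. The sketch below uses Google's published thresholds for the current Core Web Vitals set (LCP, INP, and CLS; INP replaced FID in March 2024). The `rate` helper and the sample measurements are hypothetical.

```python
# (good_max, needs_improvement_max) per metric, from Google's published thresholds.
# LCP is in seconds, INP in milliseconds, CLS is unitless.
THRESHOLDS = {
    "LCP": (2.5, 4.0),
    "INP": (200, 500),
    "CLS": (0.1, 0.25),
}


def rate(metric, value):
    """Classify a measurement as good, needs improvement, or poor."""
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


# Hypothetical 75th-percentile measurements for one page group.
measurements = {"LCP": 2.4, "INP": 350, "CLS": 0.3}
for metric, value in measurements.items():
    print(f"{metric}: {value} -> {rate(metric, value)}")
```

Google assesses these metrics at the 75th percentile of page loads, so feed in that percentile rather than an average.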

Addressing these elements not only boosts rankings but also delivers a smooth, trustworthy experience for every visitor.

Implementing Schema Markup, Link Auditing, and Best Practices

Structured data and healthy links amplify your site’s visibility and authority. In this section, we cover how to enhance rich results and maintain link integrity.

Adding Structured Data

Schema markup helps search engines understand context and can trigger enhanced listings like breadcrumbs, product snippets, and review stars. FAQ rich results are a narrower case: since 2023, Google shows them mainly for well-known government and health sites. Use JSON-LD format and validate your implementations with Google’s Rich Results Test. Refer to the Schema.org vocabulary to choose the right types for your content, from articles and events to recipes and reviews.
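
Generating JSON-LD from your own data is usually safer than hand-writing it. Here is a minimal sketch that builds a Schema.org `BreadcrumbList`; the `breadcrumb_jsonld` helper and the example trail are hypothetical, and you should still validate the output before publishing.

```python
import json


def breadcrumb_jsonld(crumbs):
    """Build a <script> tag containing BreadcrumbList JSON-LD.

    `crumbs` is an ordered list of (name, url) pairs, from the homepage down.
    """
    data = {
        "@context": "https://schema.org",
        "@type": "BreadcrumbList",
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "item": url}
            for position, (name, url) in enumerate(crumbs, start=1)
        ],
    }
    # Escape "</" so a stray "</script>" in the data cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical breadcrumb trail for illustration only.
trail = [
    ("Home", "https://example.com/"),
    ("Blog", "https://example.com/blog/"),
    ("Technical SEO Audit", "https://example.com/blog/technical-seo-audit"),
]

print(breadcrumb_jsonld(trail))
```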

Auditing Internal and External Links

Broken links and orphaned pages hurt user navigation and waste crawl budget. Use tools like Screaming Frog to detect 404 errors and redirect loops. Regularly review external links to authoritative sources for accuracy and relevance, which helps build trust and supports E-E-A-T (experience, expertise, authoritativeness, and trustworthiness).
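
Orphaned pages are the ones that appear in your sitemap but receive no internal links. A rough sketch, assuming you have both the sitemap URLs and an internal link export on hand; `find_orphans` and the sample data are hypothetical.

```python
def find_orphans(sitemap_urls, internal_links, home):
    """Return sitemap URLs that no crawled page links to.

    `internal_links` maps each crawled page to the pages it links to.
    """
    linked = {target for targets in internal_links.values() for target in targets}
    linked.add(home)  # the homepage is the entry point, so it is never an orphan
    return sorted(set(sitemap_urls) - linked)


# Hypothetical data for illustration only.
SITEMAP = ["/", "/blog/", "/blog/audit-guide/", "/landing/old-campaign/"]
INTERNAL_LINKS = {
    "/": ["/blog/"],
    "/blog/": ["/blog/audit-guide/", "/"],
}

print(find_orphans(SITEMAP, INTERNAL_LINKS, home="/"))
```

Each orphan either deserves an internal link from a relevant page or should be removed from the sitemap.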

Fixing Duplicate Content

Duplicate content makes it harder for search engines to pick the right URL to index and wastes crawl budget. Address this by implementing canonical tags, employing 301 redirects, or setting meta robots noindex directives on secondary pages.
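
To audit canonical tags in bulk, you could extract them from saved HTML and flag pages that lack one or point elsewhere. This sketch uses Python's standard HTML parser; the helper names and sample pages are hypothetical, and it compares URLs as plain strings without normalizing them.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Records the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attr = dict(attrs)
        rel_values = (attr.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attr.get("href")


def canonical_of(html):
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


def audit_canonicals(pages):
    """Map each URL to a note when its canonical is missing or points elsewhere."""
    report = {}
    for url, html in pages.items():
        canonical = canonical_of(html)
        if canonical is None:
            report[url] = "missing canonical"
        elif canonical != url:
            report[url] = f"canonicalized to {canonical}"
    return report


# Hypothetical pages for illustration only.
PAGES = {
    "https://example.com/shoes": '<link rel="canonical" href="https://example.com/shoes">',
    "https://example.com/shoes?sort=price": '<link rel="canonical" href="https://example.com/shoes">',
    "https://example.com/about": "<head><title>About</title></head>",
}

for url, note in audit_canonicals(PAGES).items():
    print(url, "->", note)
```

A page canonicalized to another URL is not necessarily a problem; the report is a list to review, especially for pages you expect to rank on their own.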

Scheduling Regular Audits

Technical SEO is not a one-off task. Schedule quarterly audits to catch emerging issues early. Automate reports in Search Console and third-party platforms to stay proactive. By tracking changes over time, you can measure the impact of your optimizations and adjust your strategy accordingly.

Combining structured data, link hygiene, and ongoing monitoring ensures that your site remains robust, authoritative, and primed for growth.

Conclusion

Conducting a comprehensive technical SEO audit is foundational to achieving long-term organic success. By thoroughly examining crawlability, indexation, performance, security, mobile usability, structured data, and link health, you can eliminate barriers that impede both search engines and users. Leveraging the tools and techniques covered above—ranging from Google Search Console and Screaming Frog to performance scanners and log analyzers—enables you to pinpoint issues efficiently and remediate them based on priority.

In today’s fast-paced digital environment, staying proactive with regular audits and adhering to best practices is key to maintaining visibility and trust. Implementing these strategies will not only elevate your search rankings but also deliver a superior experience for every visitor. Embrace a structured approach, collaborate cross-functionally, and monitor your progress closely. A healthier site architecture and optimized performance will set the stage for sustained growth, higher conversions, and measurable SEO gains.